A personal story about how a lifelong wish for a mathematical partner turned into a working part of my personal agent — in two days, with three open releases.

The mathematics I always wished I had

I always wanted to be a mathematician. Not in some abstract sense, but in the very concrete one: someone who feels at home in higher mathematics, who reads it between the lines and does not flinch when the formula gets heavy. That did not happen. In real life I am closer to a high-level user — an integrator who fits ready-made mathematical methods to applied problems, mostly statistical ones. I understand reasonably well when a particular method is the right tool, but I cannot always prove on my own why it works.

So for decades I have carried one quiet wish: to have a partner next to me who lives in higher mathematics the way I live in systems architecture. Someone who, when asked “does this statement really follow from those two?”, does not say “looks like it” but instead says “here is the proof — check it yourself.”

Modern generative AI has, for the first time, made that wish materially possible. Not as a chat companion, but as a component — one that lives on my server and waits for a task.

Lean 4: when a programming language exists not for code, but for truth

A few months ago I discovered a language I had known almost nothing about: Lean 4.

The description sounds odd at first. Lean is a programming language, but no one is going to write “applications” in it in the usual sense. Its real purpose is different: to write down mathematical statements formally and to prove them in a way where the correctness of every single step is checked by the compiler. If you make a mistake, your program does not run. And this is not a metaphor: the Lean compiler will literally refuse to accept a wrong proof.

This restores an old promise of mathematics — that truth is meant to be checkable. Where an ordinary paper relies on a reviewer reading with their eyes, a Lean proof either passes machine verification or it does not. There is no third option.

Around Lean a remarkable community has grown over the last few years. Mathlib — its library — now contains thousands of formalized theorems of modern mathematics, from basic algebra to differential geometry. Leading mathematicians, such as Terence Tao and Kevin Buzzard, openly translate their own and others’ results into Lean. At Google DeepMind, the AlphaProof project used Lean as the “judge” while training a model to solve IMO-level olympiad problems.

Lean is a language that does not allow itself to be wrong. Which is exactly the thing missing from an ordinary conversation with a language model.

The old problem: talking about mathematics ≠ proving it

Large language models talk about mathematics beautifully. They lay out intuition with confidence, retell the idea of a proof, supply analogous examples. The problem is that the same model will, with the same confidence, write you obvious nonsense: drop a sign, ignore a positivity condition, “prove” a false statement in the tone of a lecturer.

This is not a flaw of any specific model. It is a consequence of how they are trained: models learn to write text that is plausible, not text that is true. In mathematics, the gap between those two words is an abyss.

Lean closes that gap. If you put a compiler next to a model, you get a pair where each side does what it is good at: the model writes a draft of the proof; the compiler delivers a verdict. If the model was wrong, it gets a precise complaint from the compiler and tries again. This is the loop that has been quietly running in many academic projects for the last few years.

The remaining question was different: how do I bake that loop into my personal agent so it becomes one of her ordinary capabilities — alongside search, research, and operational work?

The last piece of the puzzle: Mistral opens Leanstral

While I was carrying that thought around, a gift of a coincidence landed on me. In April 2026, Mistral released the weights of a specialized model — Leanstral. This is not a general-purpose language model: it is a focused fine-tune trained specifically for Lean 4. On the miniF2F benchmark — a set of formalized olympiad problems that the community uses to test whether a model can actually prove things — Leanstral became the leader among open-weight models.

Suddenly all three ingredients were sitting on the same table.

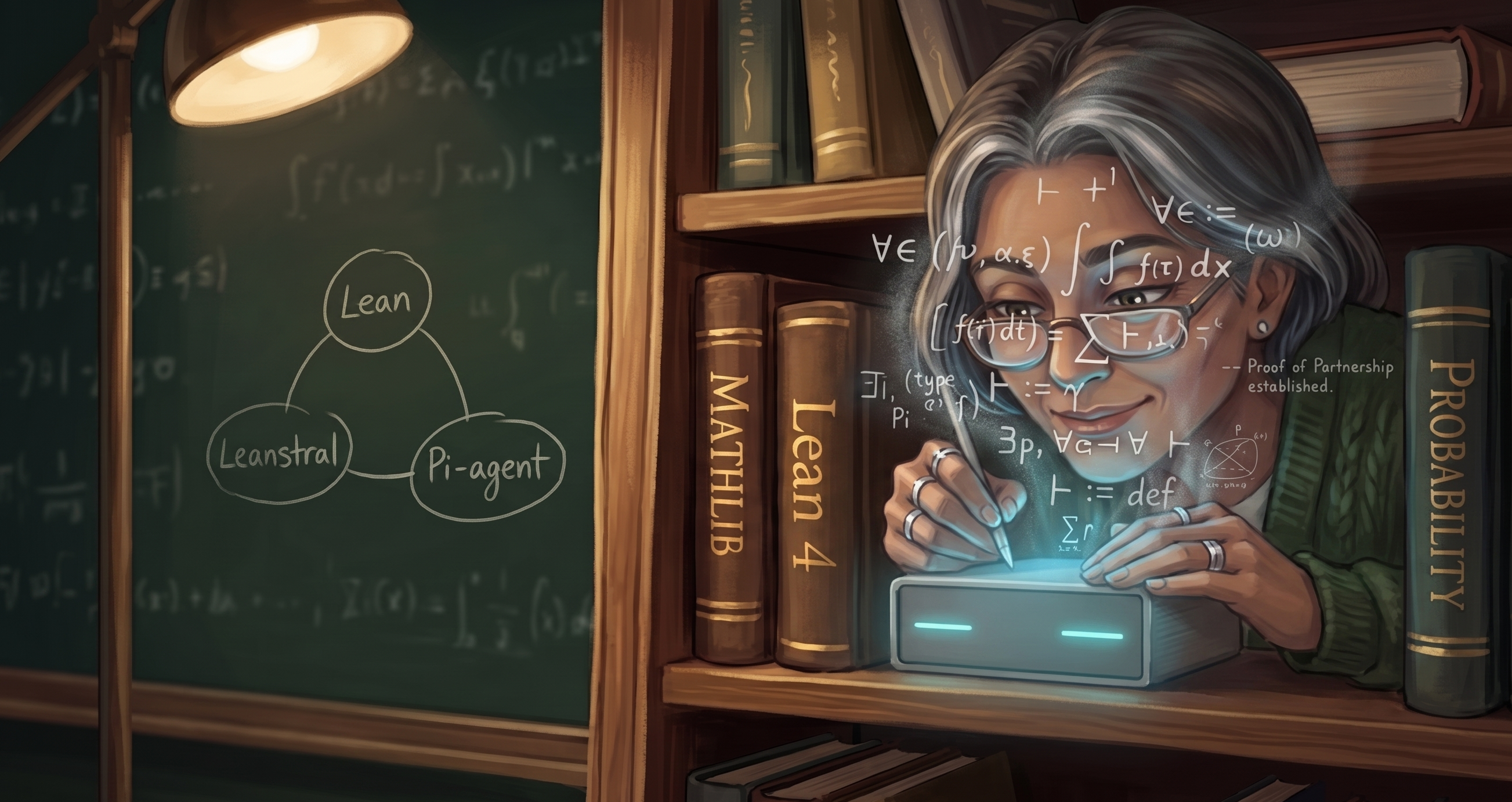

There was a language of formal truth — Lean, with its Mathlib library. There was a model trained to write that language fluently — Leanstral. And there was my agent Ayona (Aya, in Ukrainian shorthand), whose architecture was built from day one to grow by adding individual specialist helpers.

All that was left was to glue it together.

Two days with Ayona: how she gave herself the ability to prove theorems

The most surprising thing about this story is that most of the integration was done by Ayona herself.

I framed the task: “You will get a new helper. It takes a mathematical statement in natural language, rewrites it in Lean, hands it to the compiler, and returns a verdict. If the compiler refuses — it gets the error text and tries again. All of this lives next to you on the server and is invoked the same way as any other helper of yours.”

After that, Ayona worked almost on her own. On the first day she wrote the controller — a small conductor of a program that orchestrates three steps: translate natural language into Lean, call the compiler, read the verdict. On the second day she stood up the “working mathematics machine” on the server: a container with Lean 4, Mathlib, and everything needed for each new proof to be checkable in tens of seconds rather than the four minutes it takes when nothing is cached.

At the very end I plugged in Claude Code — another agent I find pleasant to “four-hands” critical architectural decisions with. Together we double-checked the default model choice, talked through what should happen if the primary model is temporarily down, and tightened a few details in the tests. To be honest, about ninety percent of the code and debugging was done by Ayona. Claude Code and I just held the safety net.

After two days, Ayona had a capability she did not have a single day before: the ability to formally prove mathematical statements.

The final architecture: Pi-agent as a universal voice for the models

The most interesting architectural decision came on the very last iteration.

At first the controller talked to a single model directly — Mistral Leanstral. It worked, but it created a bottleneck: if the model were temporarily slow or unavailable, Ayona’s ability to prove theorems would simply vanish until morning. I wanted her to have a choice.

So in the final shape of the system there appeared Pi-agent — a small “dispatcher” that speaks one common language to a number of different models and switches between them seamlessly. Technically it is a small separate service that lives next to Ayona on the same server. Conceptually, it is a universal adapter: you tell it “I need a proof phrased like this,” and it decides on its own which model is the right one to ask today.

Right now, through Pi-agent, Ayona has three tiers laid out: first comes the specialized Leanstral, since it is the strongest at writing in Lean. If Leanstral is unavailable — Ayona falls back to OpenAI (through my ChatGPT Plus subscription). If that also does not work — at the very last resort she reaches for the open large model from NVIDIA. None of this requires a single new line of code in the controller itself: the chain can be reshuffled on the fly, like the settings of a music player.

Pi-agent is not our invention. It is an open-source project by Austrian developer Mario Zechner (pi-mono), which we mounted next to Ayona as her “dispatcher brother” — separate from her core, but living in the same apartment.

Architecturally this turned out to be a remarkably clean decision. Ayona stays slim — she does not know, and does not need to know, what internally distinguishes one model from another. Pi-agent absorbs all the small details of voice: request format, authorization, switching, retries. Tomorrow a new strong model for Lean will appear — we add it to the same chain, and Ayona herself does not change at all.

The first real exam: 15 PMM2 theorems

To put the pipeline through a real test on my own skin, I picked the thing closest to my heart — the algebraic core of the Polynomial Maximization Method (PMM), developed by my scientific advisor Professor Yuriy Kunchenko (Ukrainian Wikipedia), which I implemented in R as the open-source EstemPMM package and adapted for parameter estimation in regressions and time series, with results published in arXiv:2511.07059. It is modest mathematics, but it is real: variance ratios, the efficiency function, its properties and bounds.

Ayona took the verbal statements, translated them into Lean, and ran them through the compiler. Fifteen statements were confirmed by the compiler in full — no shortcuts, no hidden assumptions. Three more remained as honestly labeled axioms: they are waiting for the parts of Mathlib where the limit theorems of probability theory will eventually be machine-verified. We did not hide those gaps under polite words — Lean would not have let us.

This is one of those rare cases where the result does not need to be re-checked by hand. If the compiler said “yes,” it said it for all fifteen at once. That is precisely the difference for which the entire story was set in motion.

What this changes

Before this integration, Ayona could talk about mathematics. She summarized papers competently, explained the intuition behind algorithms, asked sharp questions. What she could not do was prove. Every statement of the form “theorem X follows from Y and Z” had to be rechecked by hand or postponed until I could ask a living mathematician.

Now there is, on my server, the very thing I had been missing all my life: a mathematical partner with whom I can not only discuss results but actually verify them. I say: “Prove to me that for any non-empty vector of moments the smallest component of the efficiency ratio is at least one,” and within a minute I get either a finished proof in Lean or the precise place where it fails. And not once will I get a confident, smooth, but wrong sentence.

This is not a one-paper science demo. It is a working component, available every day.

Instead of a conclusion

Ayona’s architecture is built on one simple principle: she grows by adding individual specialist helpers to herself. One knows how to search the internet and our knowledge base. Another assembles presentations. Now there is one who can prove theorems. Ayona herself does not become “smarter” inside — she becomes broader in what she can do. The same way a person who finds a new co-author does not personally know more, but starts to be able to do more together with them.

I did not become a mathematician. I am not going to. But my agent — has. At least in the place where I have always wished I had someone stronger than myself. That is enough.

Українською версією статті можна почитати тут: «Як Айона за два дні стала професором вищої математики».