Trust in AI is not a feeling. It’s an engineering problem.

All articles in this series are available for free on my blog: English and Ukrainian.

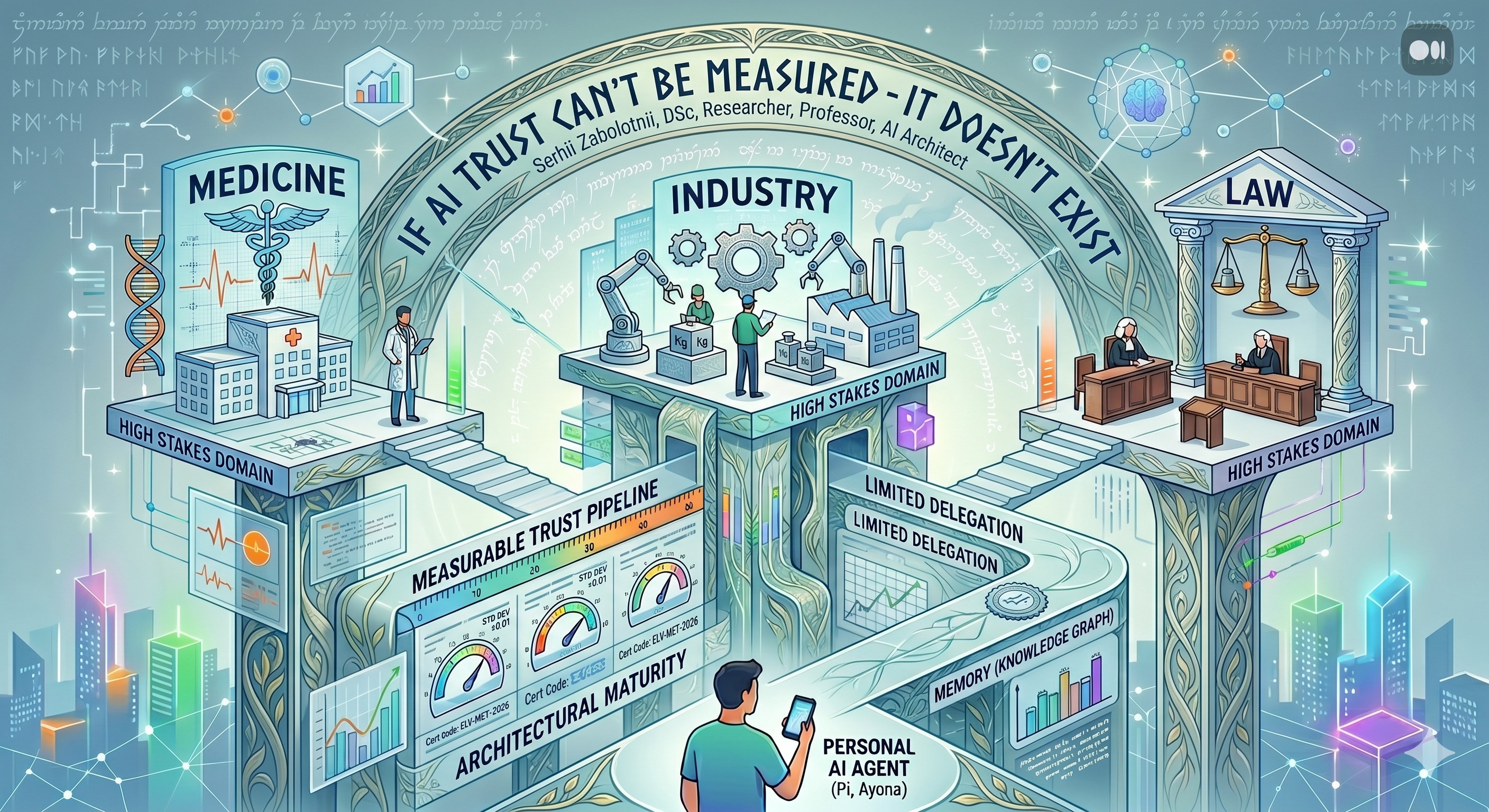

The future of agentic systems will be defined not by model capability, but by trust architecture.

How Earendil’s blog post triggered this text

I recently came across the blog of a new company, Earendil — the team that acquired Pi, a minimal and widely used open-source coding agent written by Mario Zechner. Pi is a wonderful example of craftsmanship and taste in modern software: a commitment to openness, extensibility, and code quality. Alongside the acquisition, Mario Zechner became a key stakeholder and team member at Earendil — which speaks volumes about the company’s values.

But what struck me wasn’t the technological ambition so much as the philosophy: trust in AI must be earned, not taken on faith. A system doesn’t get access to everything at once; it proves its reliability gradually, through demonstrated responsibility. Open architecture instead of a black box. Measured reliability instead of declared reliability.

This thought resonated with me because I recognized in it what I had been articulating for months. In a series of articles about the Ayona/OpenClaw system, I described how architecture — memory, knowledge graph, routing, bounded delegation, verification — transforms an AI assistant not merely into a working tool, but possibly into something more: a future colleague. In a specialized paper for the Special Issue on A Measure of Trust in Healthcare (currently under review), my co-authors and I argued that trust in clinical AI should not be an impression of the model, but a measurable architectural property of the system.

And here Earendil articulates essentially the same thing — but from the other side. Not from engineering, but from the culture of interaction. Not from metrology, but from the ethics of presence. This resonance became the trigger for the text you’re now reading: a reflection at the intersection of someone else’s vision and my own experience.

Pi as a cultural marker: from expert machine to cognitive presence

“Pi is written by Mario Zechner and unlike Peter Steinberger (author of OpenClaw), who aims for “sci-fi with a touch of madness,” Mario is very grounded. Despite the differences in approach, both OpenClaw and Pi follow the same idea: LLMs are really good at writing and running code, so embrace this. In some ways I think that’s not an accident because Peter got me and Mario hooked on this idea, and agents last year.” — Armin Ronacher

Pi gained significance not through benchmarks, but because it represented a different model of interaction. At its center is not power or a demonstration of expertise, but gentleness, attentiveness, support, and delicate accompaniment. Pi is a cultural marker of the transition from conceiving AI as an expert machine to conceiving it as a form of sustained cognitive presence.

Earendil picks up this logic and sharpens it. In their vision, artificial intelligence is not a technical service but a question of the form of interaction. What should a digital interlocutor be like so that its presence doesn’t exhaust the user, doesn’t supplant human will, and doesn’t create the illusion of infallible competence? Trust here is not uncritical belief in the correctness of an answer, but a property of the relationship between human and system.

An important conclusion follows: an affectively soft interface does not by itself guarantee reliability. A caring tone can coexist with opaque logic. The next stage after Pi therefore lies not in making an agent more communicatively appealing, but in achieving its architectural maturity.

Ayona: from thought experiment to real architecture

To understand this maturity, it’s useful to think through the figure of Ayona — not simply as yet another assistant, but as the figure of a trusted digital partner. Such an agent no longer exists in the mode of random responses. It’s embedded in sustained interaction, knows the user’s thinking style, maintains memory of previous decisions, helps maintain strategic direction — and at the same time must not dissolve into the temptation of omniscience.

In a series of articles about Ayona/OpenClaw, I describe how this figure transforms into concrete architecture: seven-level memory, knowledge graph as decision topology, hybrid retrieval, bounded delegation with contracts, model routing by complexity type. Ayona is not merely a thought experiment. It’s a working system through which the central problem of the next generation of agents becomes visible.
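One item on that list, hybrid retrieval, lends itself to a short illustration. The sketch below is not taken from Ayona/OpenClaw. It assumes that "hybrid" means fusing a keyword ranking with a semantic ranking, and it uses reciprocal rank fusion as one common way to do that. The function name, the document ids, and the constant `k=60` are all illustrative assumptions.

```python
from collections import defaultdict
from typing import Dict, List


def reciprocal_rank_fusion(rankings: List[List[str]], k: int = 60) -> List[str]:
    """Fuse several ranked lists of document ids into a single ranking.

    Each document earns 1 / (k + rank) from every list it appears in,
    so items ranked well by several retrievers rise to the top.
    """
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties are broken by id to stay deterministic.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results from two retrievers over the same memory store.
keyword_hits = ["note-7", "note-2", "note-9"]
semantic_hits = ["note-2", "note-5", "note-7"]

fused = reciprocal_rank_fusion([keyword_hits, semantic_hits])
print(fused)
```

The point of the design is that neither retriever is trusted alone: a note that only one of them finds still appears, but below the notes that both agree on.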

And this problem is not “intelligence” in the narrow sense. The real question is how to combine cognitive closeness with the discipline of boundaries. A good agent must be sufficiently engaged to be useful, but sufficiently restrained not to take over the space of human decision. An agent worthy of trust cannot be built merely as a powerful language model; it must be built as a system with different levels of verification, constraint, and human intervention.

When the cost of error changes everything

The transition from a personal agent to critical domains such as medicine, industry, and law is not merely a matter of scaling. High-risk domains change the very nature of the requirements. In personal mode, the harm from an error is limited and correctable. In medicine, manufacturing, or law, an error becomes a practical and moral event. Therefore, an agent entering these domains must change its architectural form: clear data contracts, explicit action boundaries, escalation mechanisms, logging, and places where humans remain an irreplaceable part of the system.
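Explicit action boundaries, escalation, and logging can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the mechanism of any real system: the class `ActionPolicy`, the three decisions, and the action names are hypothetical, and a real deployment would need far richer contracts.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Set, Tuple


class Decision(Enum):
    ALLOW = "allow"        # the agent may act on its own
    ESCALATE = "escalate"  # a human must approve first
    DENY = "deny"          # outside the agent's boundary


@dataclass
class ActionPolicy:
    """Hypothetical boundary check: allow-list, human-in-the-loop list, audit log."""
    allowed: Set[str]
    needs_human: Set[str]
    audit_log: List[Tuple[str, str]] = field(default_factory=list)

    def check(self, action: str) -> Decision:
        if action in self.needs_human:
            decision = Decision.ESCALATE
        elif action in self.allowed:
            decision = Decision.ALLOW
        else:
            decision = Decision.DENY  # default-deny anything not listed
        # Every decision is recorded, including refusals.
        self.audit_log.append((action, decision.value))
        return decision


# Hypothetical clinical example.
policy = ActionPolicy(
    allowed={"summarize_record"},
    needs_human={"change_medication"},
)
print(policy.check("summarize_record"))
print(policy.check("change_medication"))
print(policy.check("delete_record"))
```

Two choices carry the idea: unlisted actions are denied by default, and the escalation list is checked first, so an action that lands on both lists still goes to a human.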

Medicine: the power of restraint

In medicine, an agentic system should not imitate an omnipotent clinician. Its value lies in helping organize complexity, detecting risk in time, correlating patterns, structuring data for the physician. Trust here is built not on the charisma of a response, but on the system’s restraint: the ability to signal the limits of its applicability, show sources, separate observation from conclusion, and leave the physician in the central role of judgment. This is precisely what we are trying to substantiate in our IEEE manuscript, formulating the idea of measurable trust: trust should not be an impression, but an engineered and measurable characteristic.

Industry: embeddedness in the ecology of responsibility

In industrial environments where sensors, regulations, emergency scenarios, and human roles intertwine, an agent must be a coordinator of disciplined action. Its value lies in the ability to combine heterogeneous signals, support the operator, and avoid unnecessary alarms without concealing risk. The system must be designed so that its failures are localized and noticeable. An agent here is useful not when it’s “the smartest in the room,” but when it’s properly embedded in the already existing ecology of responsibility.

Law: where assistance ends and power begins

Jurisprudence is particularly sensitive to agentic systems because here language is always woven into power. When AI works with legal texts and precedents, every formulation can have consequences for human fates and the legitimacy of decisions. The central question is not accuracy, but the permissibility of the role. Where is the agent still structuring material, and where is it already imperceptibly programming the outcome? A legal agent should not conceal its auxiliary nature but constantly emphasize it. Its task is to help an institution think better in its own words, not to speak in its place.

Why the future belongs not to the model, but to the architecture

Across all these domains (and not only these), a common truth emerges: the fate of agentic systems will be determined not by the level of an individual model, but by the architecture in which that model is placed. The future belongs not to a universal artificial interlocutor, but to compositions where intelligence is combined with constraint, memory with discipline, and assistance with verification.

My own experience confirms this. In an AI system, reliability emerges not from a “smarter model,” but from a trust pipeline: hybrid retrieval instead of naive semantic search, scoped access instead of open access, a delegation envelope with three terminal states instead of a bare prompt, and a proof loop with automatic verification. The agent of the future will perhaps resemble not a brilliant improviser, but a well-organized participant in complex human practice. Its strength is not freedom from constraints, but the ability to act within proper boundaries.
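A delegation envelope with a proof loop might look roughly like the sketch below. The text above only says that the envelope has three terminal states. The names `VERIFIED`, `REJECTED`, and `ESCALATED`, the retry limit, and the `worker` and `verify` callables are assumptions made for illustration, not the Ayona/OpenClaw implementation.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class Terminal(Enum):
    # Hypothetical names for the three terminal states.
    VERIFIED = "verified"    # a result passed automatic verification
    REJECTED = "rejected"    # the worker declined the task as out of scope
    ESCALATED = "escalated"  # attempts exhausted, hand over to a human


@dataclass
class Envelope:
    task: str
    max_attempts: int = 3
    attempts: int = 0
    state: Optional[Terminal] = None
    result: Optional[str] = None


def run_with_proof(
    env: Envelope,
    worker: Callable[[str], Optional[str]],
    verify: Callable[[str, str], bool],
) -> Envelope:
    """Proof loop: accept a result only after an independent check passes."""
    for _ in range(env.max_attempts):
        env.attempts += 1
        candidate = worker(env.task)
        if candidate is None:  # worker signals that it declines the task
            env.state = Terminal.REJECTED
            return env
        if verify(env.task, candidate):
            env.state, env.result = Terminal.VERIFIED, candidate
            return env
    env.state = Terminal.ESCALATED  # never return an unverified answer
    return env


# A stand-in worker that is wrong once, then right.
answers = iter(["5", "4"])
env = run_with_proof(
    Envelope(task="2 + 2"),
    worker=lambda task: next(answers),
    verify=lambda task, result: result == "4",
)
print(env.state, env.result, env.attempts)
```

In this sketch the caller never sees an unverified answer: the envelope always ends in exactly one of the three states, and only `VERIFIED` carries a result.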

Instead of conclusions

Earendil articulates this from the side of the culture of presence. I propose approaching it from the side of trust engineering. But the conclusion is shared: the central task of the next stage of agentic AI lies not in increasing intellectual power, but in building structures of digital partnership in which trust is ensured not by stylistic persuasiveness, but by the organization of action itself.

If trust cannot be measured, it cannot be engineered. If it cannot be engineered, it cannot be guaranteed. And without guaranteed trust, an agent remains merely a beautiful demonstration.

This is a continuation of the article series on Ayona/OpenClaw architecture. Previous parts:

- Only a Powerful LLM Won’t Save You

- Trust Is a Pipeline

- When a Single Agent Hits Its Limits

- Ayona/OpenClaw vs Milla MemPalace

- I Finally Open-Sourced Ayona/OpenClaw

- Memory Has Architecture Too

Serhii Zabolotnii — DSc, NLP/LLM Researcher, Professor, AI Systems Architect. szabolotnii.site